React Best Practices and Optimization

Optimizing React in a company setting: what we learned from an internal working group

There are two moments when a React project sends you the bill.

The first is when the app grows and you start noticing small slowdowns, “weird” renders, components doing more work than they should. The second is when the team grows and you realize every new feature comes with a variation on the theme: different patterns, different libraries, different conventions. The code works, sure. But onboarding is slow, and every decision has to be debated from scratch.

In our case, at some point we told ourselves something decidedly unheroic but extremely useful: instead of chasing optimization like a micro-benchmark scavenger hunt, let’s bring some order to the chaos. And do it in a shared, repeatable way.

That’s how an internal working group on React best practices and optimization was born, with a clear goal: produce a practical handbook of guidelines for developers, plus a few reusable building blocks (a minimal boilerplate, a bundling comparison, and a hands-on evaluation of the React Compiler).

The core idea: you don’t get performance by “optimizing”, you get it by using React well

The point we started from is almost obvious: baseline performance in React mostly comes from not abusing its lifecycle.

A lot of issues we label as “React is slow” are really “we’re triggering unnecessary renders” or “we put state in the wrong place”. And, crucially, as a team grows, those mistakes don’t stay isolated. They get repeated. They turn into habit.

So yes, the handbook talks about useMemo, useCallback, and includes a list of recommended libraries. But the real focus is elsewhere: making the way we build React apps sustainable, so they work today and are still maintainable six months from now.

How we worked: five sessions, sub-groups, and a not-so-short document

The group met five times. At the start we did what everyone does when they want to be efficient: a brainstorming session and a sweep of recurring pain points.

From there, we picked the areas worth investing in:

- state management and its impact on renders,

- memoization (yes, but with intention),

- choosing “standard” libraries so we don’t reinvent everything,

- a boilerplate to get moving faster,

- and a hands-on look at the React Compiler, because it was impossible to ignore the temptation.

The outcome was a fairly substantial handbook (around 40 pages), structured so you don’t have to read it cover to cover: you can use it as a reference whenever you need to make a decision in a project.

Most importantly, it wasn’t meant to be a “Bible”. It’s a shared starting point, meant to be improved over time.

Where the chaos starts: state (and the art of triggering renders by accident)

If we had to pick one area that causes the most trouble in React projects, it would be this: state.

Not because useState is hard to use. But because it’s easy to make choices that look harmless at first and then become expensive over time:

- moving into global state what could have stayed local,

- sharing state without a clear rationale,

- updating more than necessary,

- letting re-renders cascade through half the app because “it works anyway”.

In the handbook, we tried to make one idea explicit: where you place state determines how often you render, and how much those renders cost.

That’s why the material helps you reason about:

- when local state is the best choice (far more often than people think),

- when shared state is actually needed,

- and how to navigate options like Context, useReducer, Redux, and Zustand.

The goal isn’t “always pick X”. It’s having a repeatable mental model, so you don’t end up re-litigating the same decision from scratch every single time.
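To make the placement idea concrete, here is a minimal sketch in plain TypeScript; it is not taken from the handbook. The names `createStore`, `subscribe`, and the `AppState` shape are hypothetical, and the store only loosely mimics the selector-based subscriptions that libraries such as Zustand offer. Each listener call stands in for a component re-render.

```typescript
// Hypothetical sketch: a tiny store where each subscriber watches one slice.
// A listener call stands in for "this component re-renders".
type Listener<S> = (slice: S) => void;

interface Store<T> {
  getState(): T;
  setState(patch: Partial<T>): void;
  subscribe<S>(selector: (state: T) => S, listener: Listener<S>): () => void;
}

function createStore<T extends object>(initial: T): Store<T> {
  let state: T = initial;
  const watchers = new Set<(next: T, prev: T) => void>();

  return {
    getState(): T {
      return state;
    },
    setState(patch: Partial<T>): void {
      const prev = state;
      state = { ...state, ...patch };
      watchers.forEach((notify) => notify(state, prev));
    },
    subscribe<S>(selector: (state: T) => S, listener: Listener<S>): () => void {
      const notify = (next: T, prev: T): void => {
        const nextSlice = selector(next);
        // Notify only when the selected slice actually changed.
        if (!Object.is(nextSlice, selector(prev))) listener(nextSlice);
      };
      watchers.add(notify);
      return () => {
        watchers.delete(notify);
      };
    },
  };
}

// Assumed application state: a global theme plus the text of a search box.
interface AppState {
  theme: string;
  query: string;
}

const store = createStore<AppState>({ theme: "light", query: "" });
const renders = { header: 0, searchBox: 0 };

store.subscribe((s) => s.theme, () => { renders.header += 1; });
store.subscribe((s) => s.query, () => { renders.searchBox += 1; });

// Typing three characters touches only the query slice.
store.setState({ query: "r" });
store.setState({ query: "re" });
store.setState({ query: "rea" });

console.log(renders); // { header: 0, searchBox: 3 }
```

In this sketch the header never "renders" while the user types, because it subscribes only to the slice it needs. If both consumers watched the whole state object, every keystroke would notify both of them, which is the accidental cascade described above.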

Memoization: useMemo and useCallback are not magic charms

This is where we get to the part many people automatically associate with “optimization”: memoization.

useMemo and useCallback are genuinely useful tools, but they’re also often used in two equally unfortunate ways:

- everywhere, “just to be safe”,

- nowhere, “because it’s premature anyway”.

In reality, they make sense when you know what you’re doing. Because memoization has a cost too: more code complexity, more dependencies to reason about, and the risk of “optimizing” something that didn’t need it in the first place.

The handbook tries to shift the conversation from “should we add it or not?” to “why are we adding it?”

- Are we avoiding a truly expensive computation?

- Are we stabilizing references to prevent cascading re-renders?

- Do we have evidence that this component renders frequently?

If the answer is no, the best option is often to keep the code simple.
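The "stabilizing references" question is easier to reason about with a toy model. The sketch below is plain TypeScript and is not React's implementation: `createMemo` is a hypothetical helper that caches one value and recomputes only when a dependency changes, comparing dependencies with `Object.is`, which is the comparison the React documentation describes for hook dependencies.

```typescript
// Hypothetical helper, not React's implementation: caches a single value and
// recomputes only when some dependency differs according to Object.is.
function createMemo<T>(): (compute: () => T, deps: readonly unknown[]) => T {
  let cached: { value: T; deps: readonly unknown[] } | undefined;

  return (compute, deps) => {
    const prev = cached;
    if (
      prev !== undefined &&
      prev.deps.length === deps.length &&
      prev.deps.every((dep, i) => Object.is(dep, deps[i]))
    ) {
      return prev.value; // cache hit: skip the computation
    }
    const value = compute();
    cached = { value, deps };
    return value;
  };
}

let computations = 0;
const sumOf = (items: readonly number[]): number => {
  computations += 1; // count how often the "expensive" work runs
  return items.reduce((total, n) => total + n, 0);
};

const memo = createMemo<number>();
const prices = [10, 20, 30]; // stable reference, reused across "renders"

memo(() => sumOf(prices), [prices]);
memo(() => sumOf(prices), [prices]); // same reference: cache hit
const afterStable = computations;

// A fresh array on every "render" has a new identity, so the cache never hits.
memo(() => sumOf([10, 20, 30]), [[10, 20, 30]]);
memo(() => sumOf([10, 20, 30]), [[10, 20, 30]]);
const afterUnstable = computations;

console.log({ afterStable, afterUnstable }); // { afterStable: 1, afterUnstable: 3 }
```

The takeaway is that memoization only pays off when the inputs are themselves stable. Wrapping a computation while passing a freshly created object or array as a dependency adds bookkeeping without ever saving work.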

Recommended libraries: fewer choices, fewer debates, real speed

Another thing that came through clearly is that, in day-to-day projects, we burn a lot of time on “non-core” problems: forms, validation, routing, tables, i18n, data fetching, testing.

They’re real problems, but they’re not the product. And more importantly: every time we pick different libraries, we increase the learning curve and make it harder for people to move between projects.

That’s why the working group produced a list of recommended libraries, not as a mandate but as a reference point:

- to reduce variability,

- to lower the cost of onboarding,

- to avoid homegrown solutions for problems that are already solved well.

It may sound like an “organizational” detail, but it has a direct impact on quality and on actual development speed.

A minimal boilerplate: starting well without building an internal framework (thankfully)

One of the concrete outcomes was a minimal boilerplate shared in Develer’s Git repository.

That choice was intentional: “minimal” means it gives you a common structure, but it doesn’t force application-level decisions on you (routing, sidebar, layout, and so on). It’s a base that each project can build on without having to start from scratch every time.

Something else came up during the presentation too: the boilerplate will probably be “already outdated” soon.

And that’s normal. The value isn’t having the perfect boilerplate. The value is having a shared starting point that can evolve, instead of ten different beginnings across ten different repositories.

Webpack vs esbuild: the conservative choice that’s often the right one

On the bundling side, we compared Webpack and esbuild.

As a bundler, esbuild is fast, modern, and very tempting. But in a company setting, decisions often have to account for a few extra factors:

- maturity,

- ecosystem,

- edge cases,

- long-term predictability.

That’s why the final decision was conservative: sticking with Webpack, not because it’s “better”, but because it’s more mature and less risky as a shared standard.

Sometimes the best optimization is simply not introducing one more variable.

React Compiler: interesting promises, an honest test, and an… inconclusive result

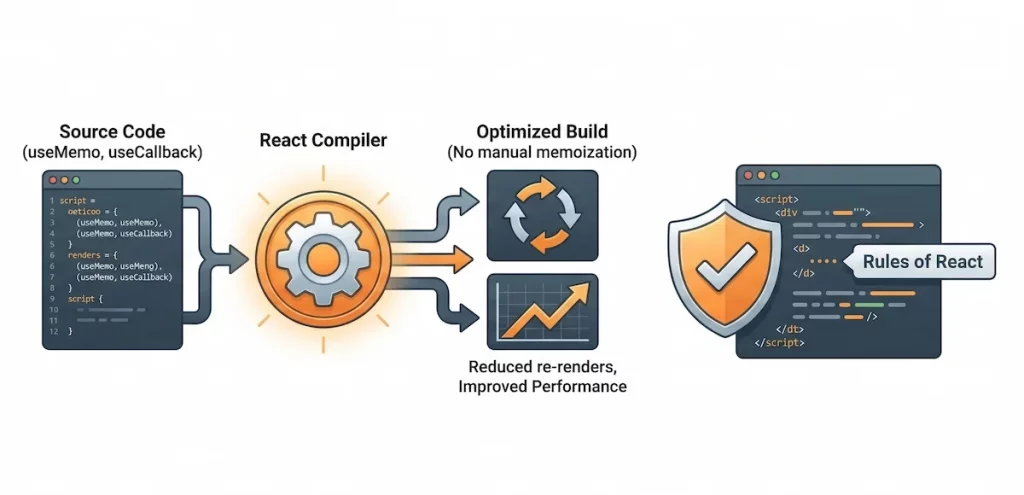

The most exploratory part of the work was testing the React Compiler, the tool that promises to automate memoization (think React.memo, useMemo, and useCallback) and reduce re-renders.

We learned two useful things.

First: the Compiler isn’t a patch. It only works well if the app follows React’s lifecycle rules rigorously. So it won’t save you from a messy architecture; if anything, it forces you to be more disciplined.

Second: to evaluate it properly, you need the right kind of case study.

We tested it on a small React 18 app, already fairly simple and without obvious lag issues. In React DevTools, you could see memoization being applied even without explicit useMemo or useCallback in the code. But we didn’t observe dramatic performance gains: the app was probably too small for meaningful differences to show up.

On top of that, the Compiler comes with clear requirements: modern React, function components and hooks, no class components. Full support is designed for React 19, while React 17 and 18 require additional dependencies and behave somewhat differently.
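As an illustration of the version requirement, this is roughly what opting a React 18 project into the Compiler looks like according to the public React documentation as we understand it: the `babel-plugin-react-compiler` Babel plugin with a `target` option, plus the `react-compiler-runtime` package installed as an extra dependency. The snippet is a sketch of a Babel configuration written as a TypeScript object, not the exact setup from our test, and the option names may change between releases.

```typescript
// Sketch of Babel settings for the React Compiler on React 18.
// Assumes `babel-plugin-react-compiler` and `react-compiler-runtime` are
// installed; verify the option names against the current React docs.
const reactCompilerOptions = {
  target: "18", // documented values: "17" | "18" | "19"
};

// The compiler plugin as a [name, options] pair, the usual Babel plugin form.
const compilerPlugin: [string, { target: string }] = [
  "babel-plugin-react-compiler",
  reactCompilerOptions,
];

const babelConfig = {
  plugins: [
    compilerPlugin, // the React docs ask for it to run before other plugins
    // ...the project's other Babel plugins would follow here
  ],
};

console.log(JSON.stringify(babelConfig, null, 2));
```

In a real project this object would be what the Babel configuration file exports. On React 19 the `target` option and the extra runtime package should not be needed.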

Final verdict? “Inconclusive test.”

And that’s perfectly fine, because an inconclusive result is still information. It means: we don’t adopt it on faith. If we want to evaluate it seriously, we’ll do it on a real, measurable case, where there’s an actual performance problem to solve.

The point isn’t to enforce rules, but to build a living handbook

Are these guidelines mandatory, or are they just documentation?

They’re a starting point, not a dogma. The ideal goal is to evolve them into a standard handbook, but that takes sustained work over time, real-world examples, and input from people with different experiences.

In practice, the handbook only becomes truly valuable if it stays alive: updated as technologies change and as we learn new lessons from real projects.

Conclusion: optimizing React means making the project more predictable

If we had to sum up the point of this work in a single sentence, it would be this: optimization isn’t a final step, it’s a way of building.

Managing state well, avoiding unnecessary renders, using memoization with intention, standardizing non-core choices, starting from a shared structure: none of this is glamorous, but it’s what makes projects more predictable and sustainable.

And if that also leads to a lighter bundle or faster rendering, even better. But the real optimization is the one that reduces chaos. In a company setting, reducing chaos is basically a superpower.

The team

We’d like to thank the entire working group: Alessandro Mamusa, Giuseppe Chiarella, Nadir Sampaoli, Emilio Veloci, Matteo Bonini, Federico La Rovere, Roberto Gianassi, Diego De Angelis, and Lorenzo Savini.